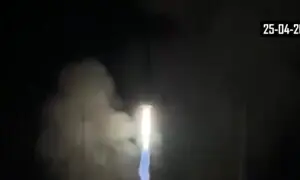

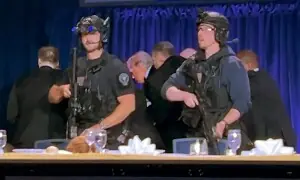

LAST month, the early hours of May 10 witnessed Indian strikes on the Nur Khan Airbase. Moments later, X erupted with AI-generated combat footage, doctored satellite images and deepfake videos of mass destruction. Within hours, the borderless digital arena became the primary theatre of war, marked by weaponisation of AI by Indian mainstream and social media.

As Elizabeth Threlkeld of the Stimson Centre observed at the time, “the media ecosystems are really emphasising fake news in many cases”. And indeed, by midday, international news outlets had picked up the narrative. Disinformation metastasised. Our official response was piecemeal. Had it not been for the thousands of citizen activists on social media platforms, the outcomes would have been quite different.

A clear lesson emerged from these events: AI is now a national security issue, fundamentally strategic in nature, and no longer simply administrative. If an adversarial actor can inject synthetic content into our information ecosystems faster than we can verify it, then even the most resilient institutions are undermined. Influence in the digital age has become a function of speed. And AI accelerates the speed of confusion and engineered chaos.

Pakistan’s national AI and digital policies are not designed for such crises. They contain no provisions for response protocols during conflict-induced narrative attacks and, critically, no institutional architecture to coordinate civilian, defence and media responses to digital conflict.

This leaves us vulnerable as our adversaries advance. India continues integrating AI into its ISR (Intelligence, Surveillance, and Reconnaissance) systems, deploying it across military planning, strategic communications and media manipulation capabilities.

Without adequate safeguards, Pakistan faces a nightmare scenario: coordinated AI attacks. Imagine AI systems spreading fake reports of wartime casualties to demoralise the public while simultaneously pushing tailored disinformation to sway political sentiment, inflaming sectarian tensions in vulnerable communities, and manipulating financial markets to trigger panic. All happening at once, faster than any human response team could counter. These are only some of the AI-enabled attack vectors Pakistan could face, and our current policy frameworks contain no effective guardrails against them.

We need to act fast. Treating this as a national security issue, we must adopt a systems-based approach to counter AI-enabled information warfare. This rests on three core pillars: institutional response infrastructure, technical capabilities and human capacities.

The first pillar requires a ‘National AI and Information Warfare Response Unit’ that brings together AI experts, civilian and military intelligence officers, cyberwarfare specialists, and media analysts under a unified command. When the next crisis hits, this unit would immediately coordinate with the foreign ministry, military public relations (ISPR), intelligence agencies and telecom companies to counter false information at the speed it spreads. This unit would also work with our diplomats abroad to ensure Pakistan’s narrative reaches international audiences before our enemies’ lies do.

The second pillar requires technical capabilities that rebuild public trust and help citizens quickly distinguish fact from fiction. These must include traceability protocols (digital fingerprints that show where content came from) and government systems that automatically flag doctored content in real time, proactively spotting and neutralising false information before it goes viral.

The third pillar focuses on building human capacities to question suspicious content, verify sources and resist psychological manipulation. It requires mainstreaming digital and AI literacy. Students, journalists, diplomats, civil servants, soldiers and officers all need training to recognise AI manipulation. When everyone can spot fake news, the entire nation becomes harder to deceive.

The bottom line is that during the India-Pakistan conflict of May 2025, we found ourselves in a moment when lies travelled faster than the truth. And unless our verification systems match the velocity of disinformation, we will keep losing. These three foundational elements can help us fight back at the speed of modern warfare. In the next crisis, truth won’t go viral on its own. It will have to be built ahead of the chaos.

The writer is a strategic adviser with experience in public policy, digital governance, and institutional transformation. He is a certified director, Fulbright scholar, and a former consultant to the Prime Minister’s Office and the World Bank.

Published in Dawn, June 23rd, 2025