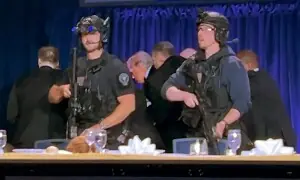

London police started using facial recognition cameras on Tuesday to automatically scan for wanted people, as authorities adopt the controversial technology that has raised concerns about increased surveillance and erosion of privacy.

Surveillance cameras mounted on a blue police van monitored people coming out of a shopping centre in Stratford, in east London. Signs warned that police were using the technology to find people wanted for serious crimes. Officers stood nearby, explaining to passers-by how the system works.

It's the first time London's Metropolitan Police Service has used live facial recognition cameras in an operational deployment since carrying out a series of trials that ended last year.

London police are using the technology despite warnings from rights groups, lawmakers and independent experts about a lack of accuracy and bias in the system and the erosion of privacy. Activists fear it's just the start of expanded surveillance.

"We don't accept this. This isn't what you do in a democracy. You don't scan people's faces with cameras. This is something you do in China, not in the UK," said Silkie Carlo, director of privacy campaign group Big Brother Watch.

Britain has a strong tradition of upholding civil liberties and of not allowing police to arbitrarily stop and identify people, she said. "This technology just sweeps all of that away."

Police Commander Mark McEwan downplayed concerns about the machines being unaccountable. Even if the computer picks someone out of a crowd, the final decision on whether to investigate further is made by an officer on the ground, he said.

"This is a prompt to them that that's somebody we may want to engage with and identify," he said.

London's system uses technology from Japan's NEC to scan faces in the crowd and check whether they match any on a watchlist of 5,000 faces created specifically for Tuesday's operation.

The watchlist images are mainly of people wanted by the police or courts for serious crimes like attempted murder, said McEwan.

London police say that in trials, the technology correctly identified 7 in 10 wanted people who walked by the camera while the error rate was 1 in 1,000 people. But an independent review found only eight of 42 matches were verified as correct.
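The two sets of figures are not necessarily in conflict, because they measure different things. The short Python sketch below works through the arithmetic. It assumes the police "error rate" means false alerts per passer-by, which the source does not spell out, and the crowd size and number of wanted people in it are hypothetical values chosen only for illustration.

```python
def precision(correct_matches: int, total_matches: int) -> float:
    """Share of system alerts that turned out to be correct."""
    return correct_matches / total_matches


def expected_alerts(crowd_size, wanted_in_crowd, hit_rate, false_alert_rate):
    """Expected (true, false) alert counts for one crowd.

    Assumes false_alert_rate applies to each passer-by who is NOT on
    the watchlist; that reading of "1 in 1,000" is an assumption.
    """
    true_alerts = wanted_in_crowd * hit_rate
    false_alerts = (crowd_size - wanted_in_crowd) * false_alert_rate
    return true_alerts, false_alerts


# Figure reported by the independent review: 8 of 42 matches verified.
review_precision = precision(8, 42)
print(f"Independent review: {review_precision:.1%} of matches verified")

# Police trial figures (7 in 10 found, 1 in 1,000 error rate) applied
# to a HYPOTHETICAL crowd of 10,000 people containing 3 wanted people.
true_alerts, false_alerts = expected_alerts(10_000, 3, 0.7, 0.001)
share_correct = true_alerts / (true_alerts + false_alerts)
print(f"Expected true alerts:  {true_alerts:.1f}")
print(f"Expected false alerts: {false_alerts:.1f}")
print(f"Share of alerts that are correct: {share_correct:.1%}")
```

Under these assumed numbers, false alerts outnumber true ones even with a low per-person error rate, simply because almost everyone walking past the camera is not on the watchlist. The review's figure of eight verified matches out of 42 works out to about 19%.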

Police are "using the latest most up-to-date algorithm we can get," McEwan said. "We're content that it has been independently tested around bias and for accuracy. It's the most accurate technology available to us."